Meta Has a Lot Riding on the MTIA

For some reason, recent Meta news reminds me of two songs:

The Llama Song (If you had two small children as I did in 2007, you know it.) Once upon a time, Meta had a popular LLM called Llama. Remember those old days?

(Riding on the) Metro by Berlin (80s teen here). MTIA sounds like the name of a transit agency.

In the recent Broadcom earnings call, the company indicated that Meta’s MTIA project is alive and well, and implied significant shipments would come this year. The most recent Byrne-Wheeler Report discusses Broadcom earnings and MTIA’s viability, as well as the recent AMD-Meta deal. https://www.youtube.com/watch?v=tI8ezQesJQQ (There’s a table of contents in the video’s description.) Be the first to watch the video! The 1000th viewer gets an Amazon gift card.

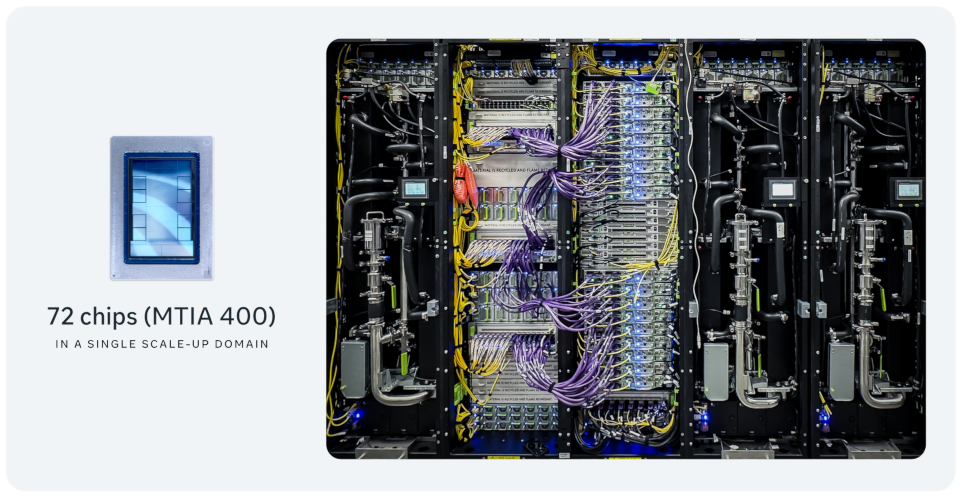

Yesterday, Meta outlined the various MTIA versions and reported that hundreds of thousands had been deployed. In any other market, that would be a lot for a chip of this scale. In data center AI, especially when compared with Meta’s commitments to AMD and Nvidia, it’s not much. After listing the MTIA 300, 400, 450, and 500, the company stated that some are already deployed (presumably the 300, if not also the 400) and that more will be deployed in 2026 and 2027.

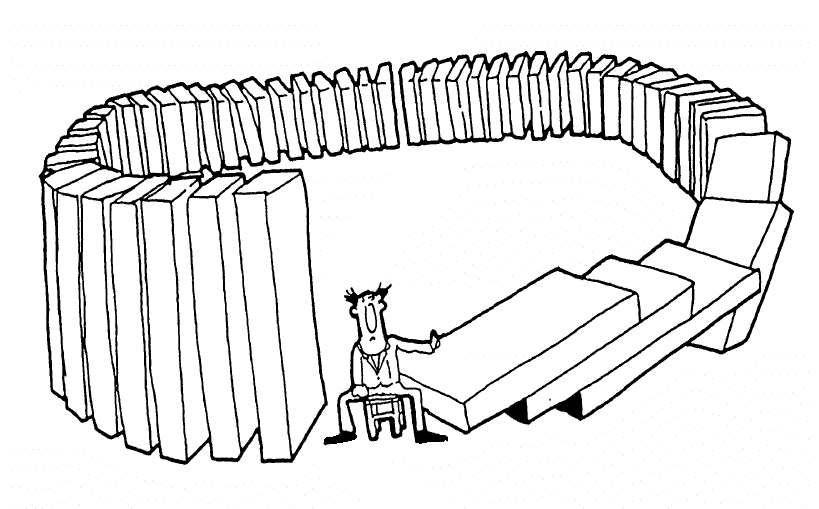

Cranking out so many versions in short order speaks to a few things: (1) Some of these must’ve been duds. (2) Meta has no reluctance to invest in its own chips. (3) Despite the duds, on the whole, execution must be passable.

I can see why the company wants something in its own control and something optimized for ranking and recommending, which lie at the core of its money-making machine. For gen AI (LLMs), it’s not clear whether its ideas are any better than those of a host of startups or how investing in MTIA is better than simply dual-sourcing from AMD and Nvidia.

Anyway, it could all come down to whether Meta can get its hands on HBM.