Google TPU Could Sell as Well as Nvidia Rubin

The FT and others reported yesterday that Anthropic is committing to spend hundreds of billions of dollars on Google’s chips and cloud services. The aim is to secure critical computing resources amid soaring demand for its AI tools. The company plans to use multiple gigawatts of capacity on Google’s TPUs, which compete with Nvidia’s GPUs. About 3.5GW of that will come through a partnership with Broadcom starting next year. The deal will give Anthropic nearly 5GW of new computing power, capacity costing tens of billions of dollars. Broadcom will also supply custom TPUs for Google through 2031.

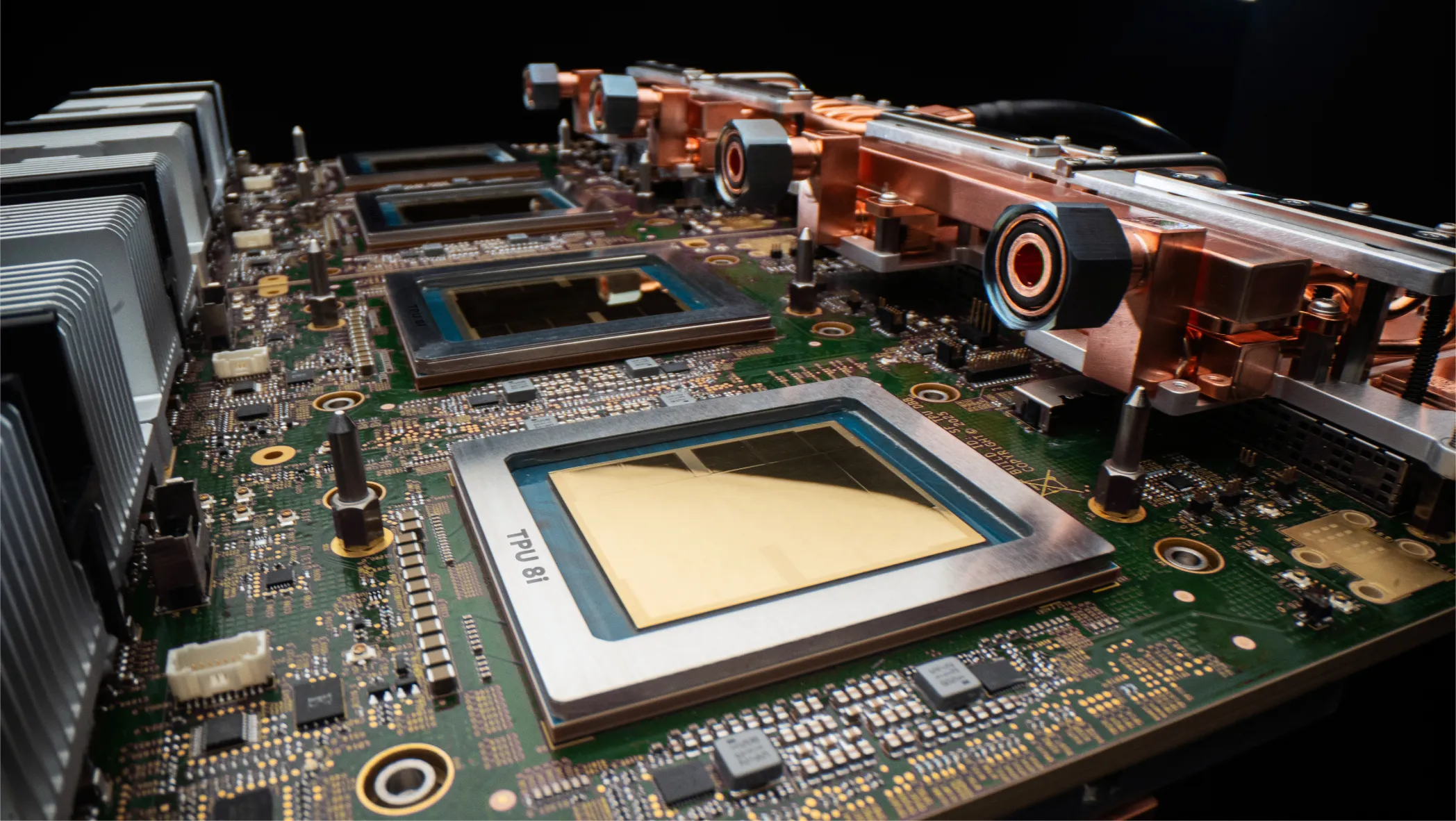

For each TPU generation (since v4?), Google has had large TPUs for training and smaller configurations for inference. The company previously disclosed that for the forthcoming v8 it would work with Broadcom on the training chip and MediaTek on the inference chip. MediaTek has impressed me with its ability to cram more functionality into less die area than its rivals.

FT link: https://www.ft.com/content/28757ce7-0d9f-4ffb-bb91-16dc83f2cf6a?syn-25a6b1a6=1