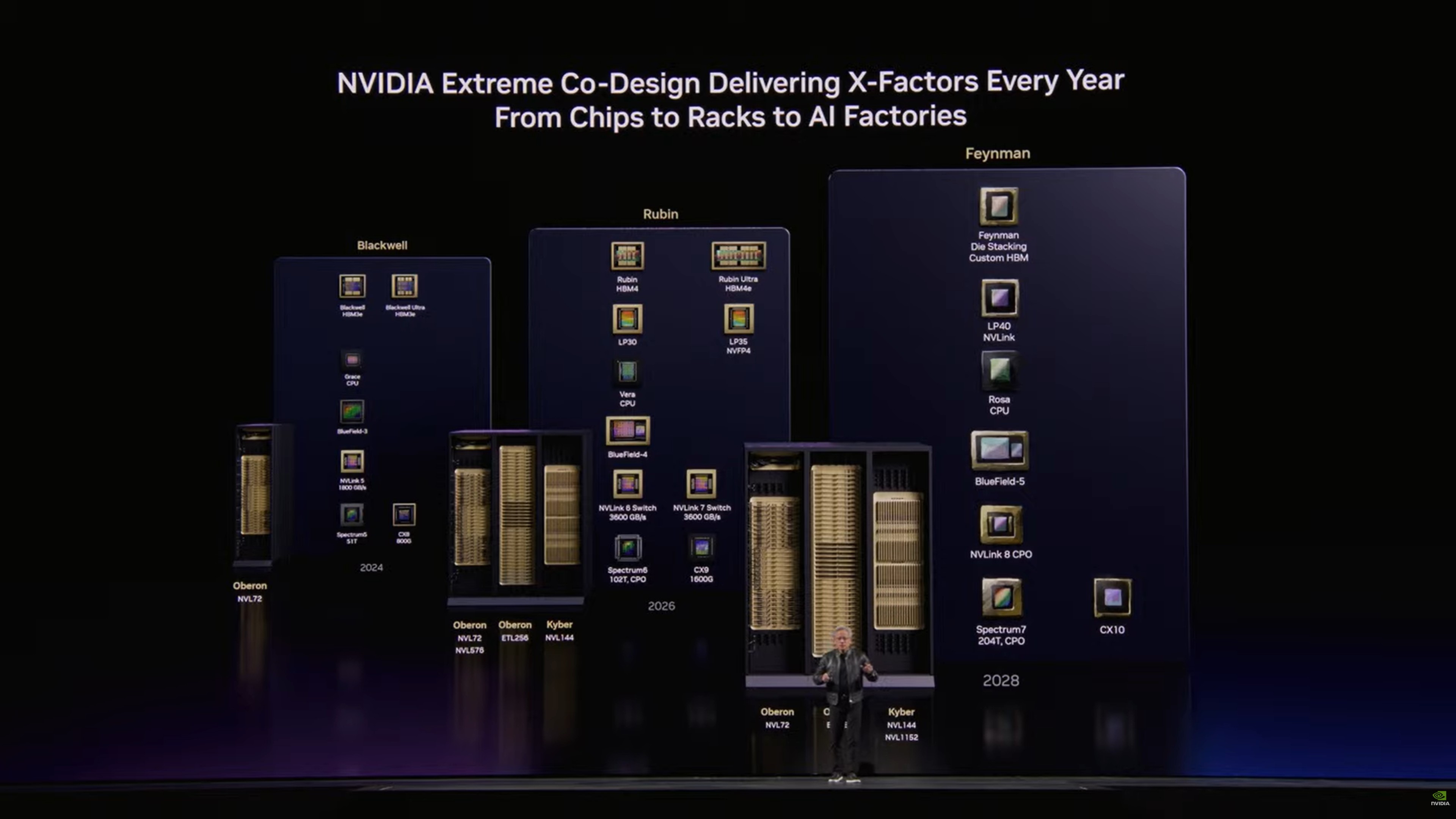

FT Also Reports Nvidia Will Announce a Groq-Based Chip at GTC

The FT is reporting (or speculating) that Nvidia will use GTC to announce an inference-specific chip, based on Groq technology, to complement Rubin. It isn't the first outlet to say so.

I'm not sure there's a "need," per se, for heterogeneous approaches to data-center AI processing. Rather:

* For better or worse, training has been tied almost exclusively to GPUs, specifically Nvidia's. (Google, with its TPUs, is a notable exception, although I'm confident its developers use plenty of GPUs, too.)

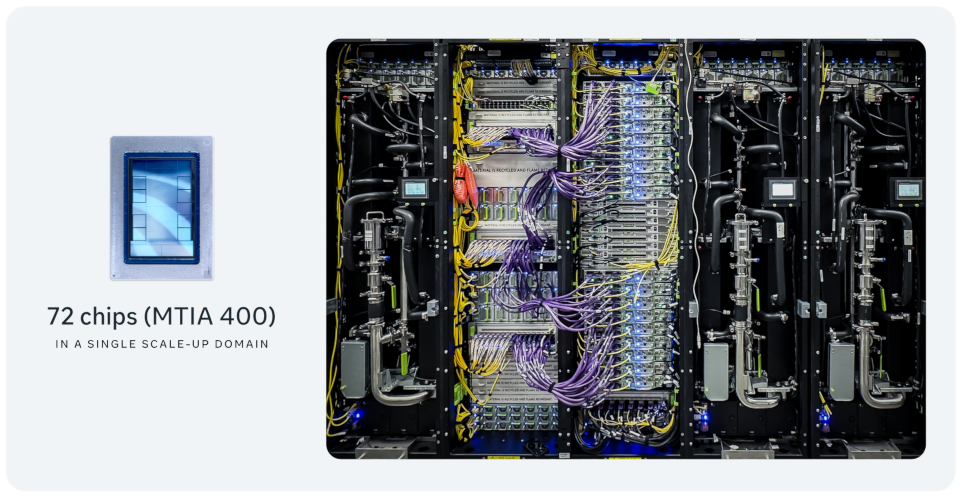

* Despite advances such as tensor cores, GPUs leave plenty of room for architectural improvement.

* There's a huge need to reduce HBM consumption, so much so that a solution that avoids HBM will be preferred even if it isn't as good as a GPU.

* Energy costs and power availability will eventually become limiting factors, so a more efficient alternative to GPUs will be required.

Challenges for Nvidia:

* Avoiding the traps exemplified by New Coke, the Osborne effect, and Itanium.

* Conversely, avoiding the innovator's dilemma.

Overall, I'm optimistic Nvidia can address these.