AWS and Cerebras Team Up on AI Inference

Amazon Web Services is deploying Cerebras CS-3 systems in AWS data centers. Available via Amazon Bedrock, the new service will offer leading open-source LLMs and Amazon’s Nova models running at the industry’s highest inference speed.

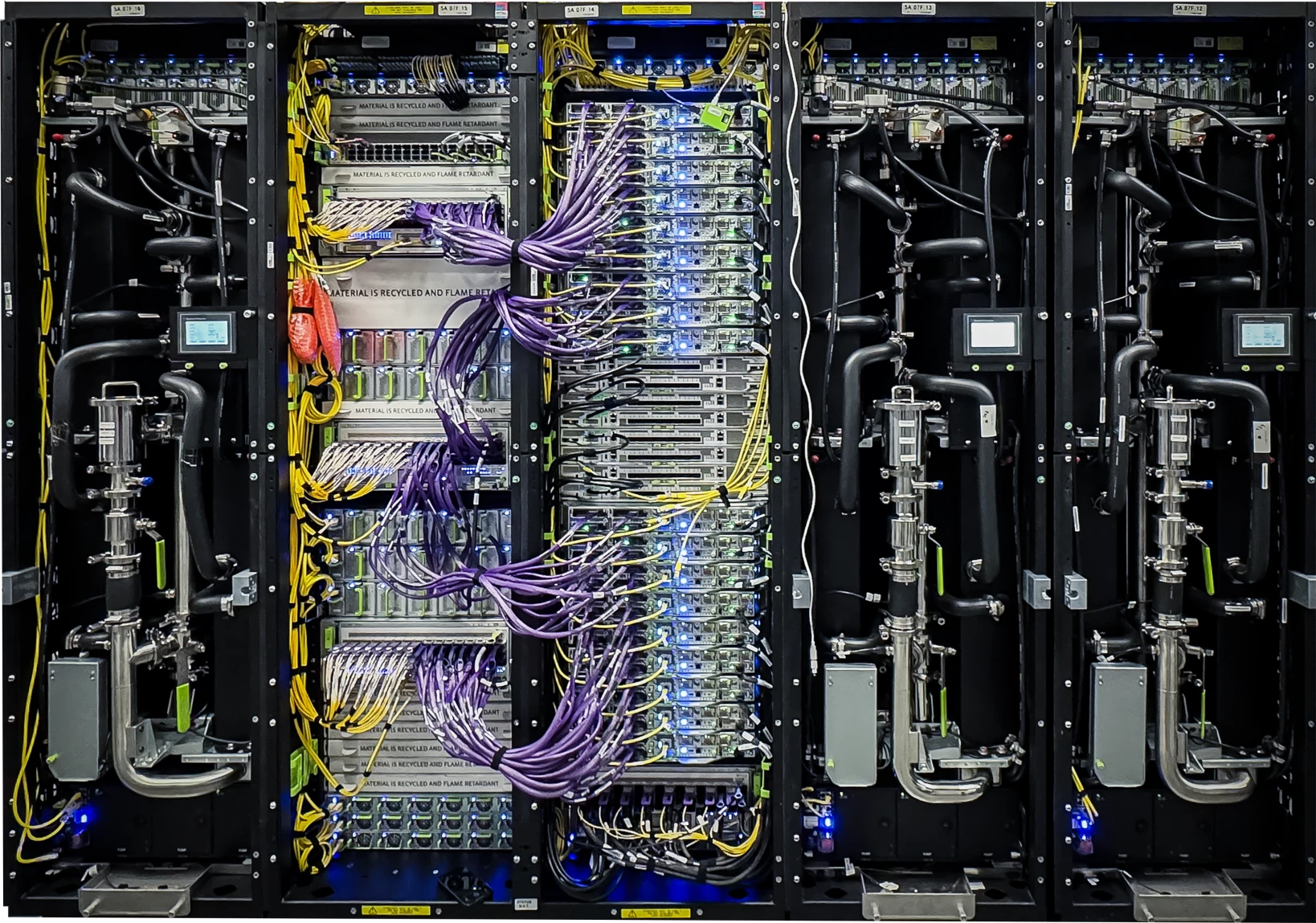

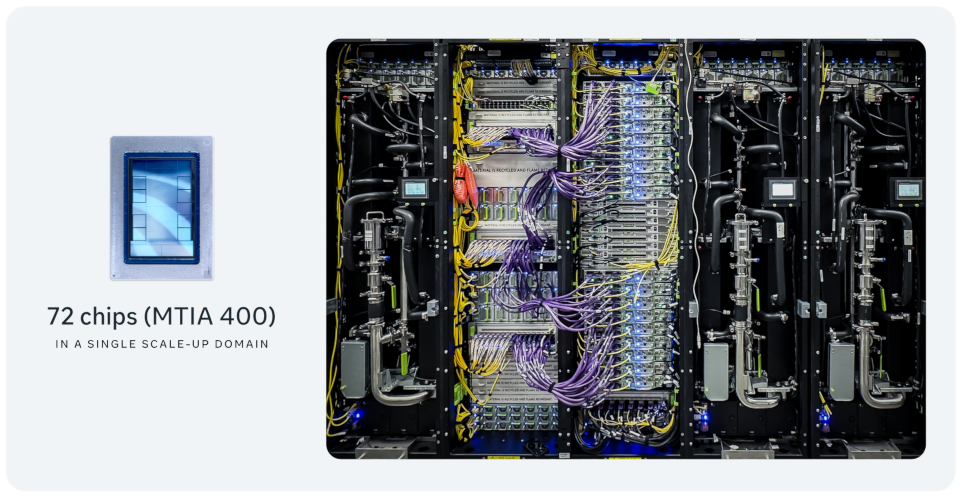

In addition, AWS and Cerebras are collaborating on a new disaggregated architecture that pairs AWS Trainium with the Cerebras Wafer-Scale Engine (WSE) to deliver 5x more high-speed token capacity in the same hardware footprint. In disaggregated mode, Trainium handles only prefill: it computes the KV cache for the prompt and sends it to the WSE over Amazon's high-speed Elastic Fabric Adapter (EFA) interconnect.

The Cerebras WSE receives the KV cache and handles only decode, generating thousands of output tokens per second versus hundreds on GPUs. This architecture plays to the strengths of each processor and gives AWS customers a 5x boost in high-speed token volume.
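The prefill/decode handoff described above can be pictured with a minimal sketch. Everything here is an illustrative assumption: `KVCache`, `prefill`, `decode`, and `serve` are hypothetical names, the "model" is toy integer arithmetic, and an in-process `Queue` stands in for the interconnect. It is not an AWS or Cerebras API.

```python
from dataclasses import dataclass
from queue import Queue
from typing import Dict, List


@dataclass
class KVCache:
    """Toy stand-in for the per-token key/value state built during prefill."""
    keys: List[int]
    values: List[int]


def prefill(prompt_tokens: List[int]) -> KVCache:
    # Prefill side: process the whole prompt in one pass and build the cache.
    # The key/value functions are placeholders, not real attention projections.
    return KVCache(
        keys=[t * 2 for t in prompt_tokens],
        values=[t + 1 for t in prompt_tokens],
    )


def decode(cache: KVCache, max_new_tokens: int) -> List[int]:
    # Decode side: emit one token per step, reading the full cache each time
    # and appending the new token's entries to it.
    output: List[int] = []
    for _ in range(max_new_tokens):
        next_token = (sum(cache.keys) + sum(cache.values)) % 100  # toy "attention"
        output.append(next_token)
        cache.keys.append(next_token * 2)
        cache.values.append(next_token + 1)
    return output


def serve(prompts: List[List[int]], max_new_tokens: int = 3) -> Dict[int, List[int]]:
    link: Queue = Queue()  # hypothetical stand-in for the interconnect
    # Producer: the prefill processor pushes finished caches onto the link.
    for request_id, prompt in enumerate(prompts):
        link.put((request_id, prefill(prompt)))
    # Consumer: the decode processor pulls caches and generates tokens.
    results: Dict[int, List[int]] = {}
    while not link.empty():
        request_id, cache = link.get()
        results[request_id] = decode(cache, max_new_tokens)
    return results


if __name__ == "__main__":
    print(serve([[1, 2, 3], [4, 5]], max_new_tokens=2))
```

The only state that crosses between the two sides is the cache itself, which is why the split depends on a fast link between processors.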

This is just the latest example of companies implementing heterogeneous AI processing (variously described as push-pull, master-slave, or producer-consumer) by dividing LLM inference into prefill and decode phases. To date, deployments have relied on a single XPU architecture, which works only if operators tolerate either memory bandwidth or compute units being starved at different times, or overprovisioning.
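A rough roofline-style calculation shows why one architecture struggles to serve both phases. This is a back-of-the-envelope sketch under stated assumptions: it counts only weight reads and roughly 2 FLOPs per parameter per token, ignores KV-cache and activation traffic, and uses a hypothetical accelerator (400 TFLOP/s, 2 TB/s) that does not describe any real product. The function names are illustrative.

```python
def arithmetic_intensity(tokens_per_pass: int, bytes_per_param: float = 2.0) -> float:
    """FLOPs per byte of weights read when a dense layer processes a group of tokens.

    Assumes ~2 FLOPs per parameter per token and that weights are read once per pass.
    """
    return 2.0 * tokens_per_pass / bytes_per_param


def bound(tokens_per_pass: int, peak_flops: float, peak_bytes_per_s: float,
          bytes_per_param: float = 2.0) -> str:
    # The ridge point is the intensity at which compute and bandwidth balance.
    ridge = peak_flops / peak_bytes_per_s
    intensity = arithmetic_intensity(tokens_per_pass, bytes_per_param)
    return "compute-bound" if intensity >= ridge else "bandwidth-bound"


# Hypothetical accelerator: 400 TFLOP/s and 2 TB/s, so the ridge is 200 FLOPs/byte.
PEAK_FLOPS = 400e12
PEAK_BYTES_PER_S = 2e12

if __name__ == "__main__":
    # Prefill: a 2048-token prompt is processed in one pass.
    print("prefill:", bound(2048, PEAK_FLOPS, PEAK_BYTES_PER_S))
    # Decode: one new token per pass for a single sequence.
    print("decode:", bound(1, PEAK_FLOPS, PEAK_BYTES_PER_S))
```

Under these assumptions prefill saturates compute while single-sequence decode leaves compute idle waiting on memory, so hardware sized for one phase is either starved or overprovisioned in the other.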

This particular deal is another important win for Cerebras and once again positions the company for an IPO. It's been two years since the WSE-3 launched. The company's unique wafer-scale approach confers latency benefits but introduces system-design constraints and appears to limit model and context sizes.